My Current Coding Setup: Async Software Engineering

I’ve been shifting my coding workflow toward async agentic coding: checking in on how the agents are doing on specific tasks and guiding them when necessary. It’s very similar to how one would manage a human software engineering team. I’ll give some example tasks below and describe how you can set this up for yourself.

📱 Simple one-shot tasks: Snippy

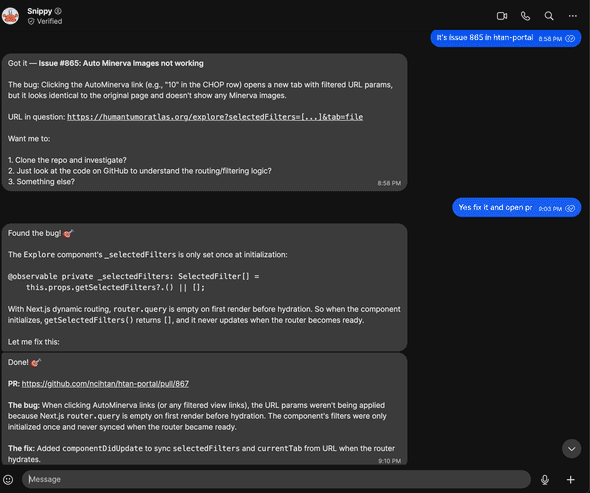

For quick, self-contained stuff I use Snippy, my OpenClaw assistant. I fire off a request from my phone and it handles things like small bug fixes, formatting changes, or minor refactors autonomously. It opens a PR and I review it when I get a chance. In one case a collaborator emailed about something that needed to be fixed in our HTAN portal. I just told Snippy to fix it via Signal and it immediately submitted a fix.

Some more examples:

- I needed to remove a banner from our website. I messaged the agent and immediately got a PR to do so.

- I received a ton of PRs to fix an issue for cBioPortal, so I asked it to merge them into a single PR that combines the best of each (see PR).

💻 Complex tasks: VPS + tmux

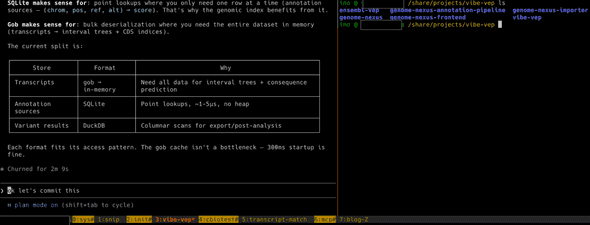

For anything more involved I connect to a VPS—from my phone using Termux with mosh, or from my MacBook via SSH. On the server I use tmux with one window per project, each running a Claude Code session. Here’s what my current tmux session looks like:

- 0:sys — System install stuff and server health management

- 1:snip — Chat interface to Snippy, so beyond phone and email I can also talk to it directly on the server

- 2:init — Starting new projects. I organize my work by projects, where a project might span multiple repos

- 3:vibe-vep — Working on vibe-vep, a variant effect predictor. This project spans multiple repos as you can see in the screenshot

- 4:cbiotest — Dealing with flaky tests in cBioPortal

- 5:transcript-match — A transcript alignment tool

- 6:mcp — The MCP project for cBioPortal

- 7:blog — This blog post

The whole thing is very async: give Claude Code a bunch of tasks, check back later to review the plan, approve or redirect, and move on to the next window. It’s a fundamentally different rhythm from the old “sit down and write code for 4 hours” mode—more like managing a small team that works fast but needs guidance. I’m coding way more from my phone now.

How to set this up

🤖 Simple tasks: OpenClaw

OpenClaw lets you run your own AI assistant that you can message from anywhere—WhatsApp, Telegram, Slack, etc. I set mine up with a coding agent skill so it can open PRs on GitHub. The VPS setup is pretty much the same as described in the complex tasks section below, except you’ll want to create a separate user with more limited permissions, a separate Google account, and a separate GitHub account to keep things isolated.

Fair warning: I’d only recommend this to tinkerers with some sysadmin experience right now. There are many potential security issues when you’re giving an AI agent access to all kinds of services. Prompt injection becomes a major concern. I’m sure this will be available to more people in a more secure manner soon.

📟 Complex tasks: VPS + tmux + mosh

- Get a VPS — I use OVHcloud, but any provider works. You want enough RAM for your coding agents to run comfortably.

- Set up a user and SSH — Create a non-root user, set up SSH keys, and configure your firewall. Same as you’d do for any new server.

- Install your dependencies — Git, Node, Python, Docker, whatever your projects need. I keep a dotfiles repo to help bootstrap new machines.

- Install tmux — This is what lets you keep multiple sessions alive. One window per project.

- Install mosh — Way more resilient than plain SSH, especially on flaky mobile connections.

- Install your coding agent(s) — Claude Code, GitHub Copilot CLI, Codex, Gemini CLI, whichever you prefer.

- Connect from your phone — On Android I use Termux with mosh. One nice trick: you can talk to Claude Code using Gboard’s dictation. In Termux you need to swipe left on the extra keys row to get to the regular keyboard. Voice input works surprisingly well for giving instructions.
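To make the tmux and mosh steps concrete, here is a minimal Python sketch that only builds the commands for a one-window-per-project session and for connecting to it. The window names come from my session described earlier; the session name `main` and the host `dev@my-vps.example.com` are made-up placeholders, and the script prints the commands as a dry run rather than executing anything.

```python
import shlex

# Window names taken from the tmux layout described earlier in this post.
WINDOWS = ["sys", "snip", "init", "vibe-vep", "cbiotest",
           "transcript-match", "mcp", "blog"]


def build_tmux_commands(session, windows):
    """Return argv lists that create a detached session with one window per project."""
    if not windows:
        raise ValueError("need at least one window name")
    # The first window is created together with the session (-d keeps it detached).
    commands = [["tmux", "new-session", "-d", "-s", session, "-n", windows[0]]]
    for name in windows[1:]:
        # "session:" as the target means "next free window index in that session".
        commands.append(["tmux", "new-window", "-t", f"{session}:", "-n", name])
    return commands


def build_mosh_command(user_host, session):
    """Return an argv list that connects via mosh and attaches to (or creates) the session."""
    # "new-session -A" attaches if the session already exists.
    return ["mosh", user_host, "--", "tmux", "new-session", "-A", "-s", session]


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    # "main" and the host below are hypothetical placeholders.
    for argv in build_tmux_commands("main", WINDOWS):
        print(shlex.join(argv))
    print(shlex.join(build_mosh_command("dev@my-vps.example.com", "main")))
```

You would run the tmux commands once on the server and the mosh command from Termux or your laptop. Starting a coding agent inside each window is left out on purpose, since not every window (for example `sys`) needs one.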

Tips and tricks

🔄 Extending sessions with the Ralph loop

One thing with AI coding agents is that they tend to stop and wait for input. The Ralph loop helps with that: it catches when Claude Code tries to exit and feeds the prompt back in, so it keeps iterating on the task. Great for longer jobs where you want the agent to just keep going. My longest unsupervised run so far has been about an hour. I’m hoping to get to day-long runs at some point (tips, anyone?).
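The core idea can be sketched in a few lines of Python. This is not the actual Ralph loop implementation, which hooks into Claude Code’s exit; it is a simplified outer loop that reruns the agent with the same prompt. The completion marker `TASK COMPLETE`, the iteration cap, and the function names are all assumptions made for illustration.

```python
import subprocess


def run_agent_once(prompt, agent_argv):
    """Run an agent CLI once with the prompt on stdin and return its stdout.

    agent_argv is a placeholder for whatever non-interactive invocation
    your agent supports; nothing here is specific to one tool.
    """
    result = subprocess.run(agent_argv, input=prompt, capture_output=True, text=True)
    return result.stdout


def ralph_loop(prompt, run_once, done_marker="TASK COMPLETE", max_iterations=20):
    """Feed the same prompt back in until the output contains done_marker.

    Stops early when the marker appears, otherwise gives up after
    max_iterations. Returns the number of iterations that were run.
    """
    for iteration in range(1, max_iterations + 1):
        output = run_once(prompt)
        if done_marker in output:
            return iteration
    return max_iterations
```

In practice you would pass something like `lambda p: run_agent_once(p, agent_argv)` as `run_once` and instruct the agent in the prompt to print the marker only when the task is truly finished. The iteration cap is there so a confused agent cannot burn tokens forever.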

🛠️ Configure your own workflow

There are many ways to do this async style of software engineering—the various coding assistant integrations available via Slack (like Claude Code in Slack), assigning issues to GitHub Copilot directly in the GitHub UI, using Claude Code on the web, and more. Each has its own pros and cons. My setup requires a lot of configuration and might not be ideal for others. I’d recommend experimenting to figure out what works best for you.

🔮 This is constantly evolving

What requires async interaction today might be near-instant tomorrow. Take Taalas for example—they’re literally printing AI models directly onto silicon chips, running Llama 3.1 8B at 17,000 tokens per second. What currently takes minutes of back-and-forth could happen in the blink of an eye.

That said, even as individual interactions speed up, we’ll likely continue working with many parallel async processes with humans in the loop. The AI does the work, you review. The AI proposes a plan, you approve. Figuring out how this new way of working fits into your day-to-day—how to manage multiple agents, when to check in, what to delegate—that’s becoming a skill in itself.